1. Every boardroom in India has an AI strategy today. And yet the gap between what organisations say they are doing with AI and what is actually happening on the ground has never been wider. In your experience, what is the real constraint — and why are most leaders looking in the wrong direction for it?

Most organisations today have AI strategies, but very few are becoming truly AI native. The gap exists because AI is often adopted defensively—driven by urgency and fear—rather than as a redesign of the business itself. Many leaders simply layer AI onto existing processes, creating pilots instead of transformation. The real shift begins when organisations ask how they would rebuild their operating model if they were AI native from day one. AI’s true value lies not in cost reduction, but in augmenting human capability and rethinking the entire value chain. Without responsible governance and long-term vision, AI scale becomes a risk, not an advantage.

2. Your core philosophy is that innovation is hidden in constraints, and constraints are hidden in data. When you look at an organisation's data landscape for the first time, what does it reveal about the organisation's culture and leadership that no annual report ever would?

An organisation’s data landscape is a mirror of its leadership and culture. Fragmented systems, duplicated data, and unclear ownership signal decision-making debt and short-term thinking. When data has no clear steward, it reflects a culture that treats data as a by-product rather than a strategic asset. Highly centralised data teams often indicate control-driven environments, while mature organisations move toward federated ownership with governance built into systems. Ultimately, strong data quality, discoverability, and trust reflect leadership maturity. Data doesn’t just show how systems work—it reveals how decisions are made, priorities are set, and whether collaboration truly exists.

The human constraint

3. AI adoption fails most often not because of bad technology but because of people — fear, inertia, hierarchy, and habit. What are the most common human constraints you encounter inside organisations, and what do they tell you about what that workplace actually values versus what it claims to?

AI adoption fails more often due to human factors than technology. Fear of losing relevance, authority, or stability creates resistance, especially when AI is perceived as a cost-cutting tool. This leads to silent pushback and underused investments. In contrast, organisations that frame AI as augmentation—helping people work smarter, faster, and better—see stronger adoption and momentum. What organisations truly value becomes visible here: those focused only on short-term efficiency struggle, while those investing in people and capability building unlock AI’s real potential. AI succeeds when employees see it as an enabler of growth, not a threat.

4. Middle management is often where AI transformation quietly dies — caught between a top-down mandate and a ground-level workforce that hasn't been brought along. How do you read that constraint, and what does it signal about the organisation's real culture of trust and communication?

AI transformation often stalls in middle management, where strategy meets execution. This group translates vision into action, yet is frequently under-supported and under-empowered. Organisations often communicate what is changing, but not why it truly matters, resulting in passive alignment rather than ownership. Middle managers need clarity on purpose, guidance on execution, and confidence in success metrics. When they disengage, it signals deeper cultural issues—limited trust and top-down change. Organisations that succeed treat middle management as transformation multipliers, investing in their capability and involving them early. Strategy sets direction, but middle management determines momentum.

5. India has a deeply hierarchical professional culture in many sectors — where questioning a decision, flagging a failure, or surfacing bad data is still a career risk. How does that cultural dynamic show up in the data, and what does it cost organisations that refuse to confront it?

In hierarchical cultures where questioning is discouraged, the smartest employees eventually stop speaking up. This cultural constraint becomes visible in the data: unresolved quality issues, hidden risks, and workarounds instead of fixes. Over time, poor data foundations lead to biased AI outcomes, weak decisions, and low trust in insights. These failures are often misattributed to execution rather than culture. Organisations that thrive encourage fact-based dissent and challenge. True collaboration means empowering voices at all levels. When questions are silenced, signals are lost—and in the AI era, ignored signals quickly become amplified risks.

The structural & cultural constraint

6. You have written and spoken about inclusion as a business and data imperative, not just a social one. When cultural constraints are embedded in an organisation's data and processes, how does that affect the quality of the AI it builds and the decisions it makes?

Inclusion is not just a social goal—it is essential for data quality and decision intelligence. Cultural constraints inevitably get embedded into data and models, and AI scales these patterns. Non-inclusive environments produce narrow datasets, biased outcomes, and heavier governance overheads. In contrast, inclusive cultures naturally generate richer, more representative data, improving model reliability and insight quality. Diverse perspectives reduce blind spots and improve problem framing. Inclusion must be built across people, processes, data, and AI systems—not retrofitted through compliance. The quality of AI is directly shaped by the diversity of thought behind it and the inclusiveness of the data it learns from.

7. Many Indian organisations have adopted Western AI frameworks and tools wholesale — without accounting for the cultural, linguistic, and structural realities of their own workforce and customer base. What goes wrong when you ignore the local constraint, and what opportunity does it represent when you don't?

Global AI frameworks accelerate innovation, but problems arise when they are adopted without adapting to local realities. Models trained on Western-centric data often struggle with India’s linguistic, cultural, and behavioural diversity, leading to poor user experience and ineffective outcomes. The opportunity lies in blending global frameworks with local data, domain knowledge, and human feedback. Techniques like fine-tuning and RAG allow models to become locally intelligent while retaining global scale. Organisations that succeed won’t choose between global and local—they will integrate both. AI becomes powerful when global intelligence is grounded in local context.

8. There is a pattern you see repeatedly — organisations investing heavily in AI infrastructure while their data culture remains broken. Silos, politics, distrust, poor data hygiene. What is that gap really telling leadership about the organisation they have built?

Heavy investment in AI infrastructure alongside a broken data culture signals technology ambition without organisational readiness. Infrastructure is easier to fund and showcase, while data culture requires structural change: breaking silos, redefining ownership, and aligning incentives. Conway’s Law applies: fragmented teams produce fragmented data. Strong data cultures are built through federated ownership, embedded governance, and quality by design. When AI is treated as a technology programme rather than an organisational transformation, intelligence fails to scale. AI doesn’t fail for lack of compute; it fails when trust is low, data is fragmented, and accountability is unclear.

The signal

9. You say constraints are where innovation hides. Can you give us a real example — without naming names — where an organisation's most frustrating constraint turned out to be the signal that unlocked its most significant leap forward?

A common constraint I’ve seen is data access latency becoming the biggest barrier to innovation. While applications modernised rapidly, delivery slowed because centralised data teams controlled access. Simple data requests took months, stalling experimentation and business agility. The breakthrough came from recognising this as an operating model problem, not a technical one. By moving to federated data platforms with self-service access and governance built into pipelines, teams were empowered to move faster without compromising control. Data access timelines dropped dramatically, experimentation increased, and innovation accelerated. What appeared as a bottleneck was actually a signal to optimise for flow, not control.

10. For a senior leader who is not a technologist — a CEO, a CFO, a CHRO — what is the one constraint in their organisation's AI journey they should be paying the most personal attention to right now? And why is it almost certainly a people or culture problem wearing a technology mask?

The most important constraint leaders should examine is whether they are rethinking how the organisation works—or simply adding AI to existing models. The real question is how the business would be designed today in an AI-native world. This exposes deeper issues: rigid hierarchies, slow decisions, siloed teams, and risk-averse cultures. These are people and culture problems wearing a technology mask. AI amplifies whatever already exists—strengths or weaknesses. Leaders must assess how comfortable the organisation is with change across all levels. AI transformation is not about smarter systems, but about building more adaptive organisations.

Looking forward

11. India is generating data at a scale the world has never seen — across languages, geographies, income levels, and behaviours. What does responsible, culturally intelligent AI adoption look like for an Indian organisation that wants to lead, not just follow?

India is generating one of the richest and most diverse data ecosystems in the world — across languages, geographies, income groups, and digital behaviours. This presents a powerful opportunity, but also a profound responsibility.

Responsible, culturally intelligent AI adoption in India begins with trust. With frameworks like the Digital Personal Data Protection Act, 2023 shaping the regulatory landscape, organisations must treat privacy, consent, and transparency as foundational — not compliance checkboxes. Data must be protected, anonymised where required, and used with clear purpose and accountability.

Equally important is cultural intelligence in data and models. Indian users interact in multiple languages, mixed dialects, and hybrid digital behaviours. AI systems must be trained and evaluated with representative datasets to avoid exclusion, bias, or misinterpretation. This requires intentional investment in multilingual datasets, inclusive design, and continuous monitoring of outcomes.

Responsible AI must also be built into architecture by design — strong access controls, data masking, lineage tracking, auditability, and governance embedded into pipelines rather than layered later. When data is treated as a precious asset, security, quality, and ethical use become organisational habits, not isolated initiatives.

Finally, leadership alignment is critical. Board-level oversight, governance frameworks, and regular audits help ensure AI systems remain fair, secure, and aligned with customer interests.

India’s scale and diversity are not just challenges — they are a global advantage.

Organisations that build culturally intelligent, responsible AI here will not only lead locally — they will help define how inclusive AI is built for the world.

12. If the constraint truly is the signal — what is the most important signal that Indian corporate culture is sending right now about its readiness for the AI decade ahead? And are leaders reading it?

The strongest signal Indian corporate culture is sending right now is urgency without uniform readiness.

AI experimentation is accelerating faster than organisational transformation. Many organisations are launching pilots and platforms, but data ownership, governance, and decision structures are still catching up. A positive shift is emerging, from cost efficiency toward value creation and new business models. The real question is whether leaders are reading the full signal. AI readiness will not be measured by how much AI is deployed, but by how organisations adapt culturally and structurally. India has the talent, data, and ambition to lead the AI decade; the deciding factor is whether leaders move from adoption to reinvention.

Disclaimer: The views expressed in this article are solely those of the author and do not represent the views of the author’s organization.

--Ends--

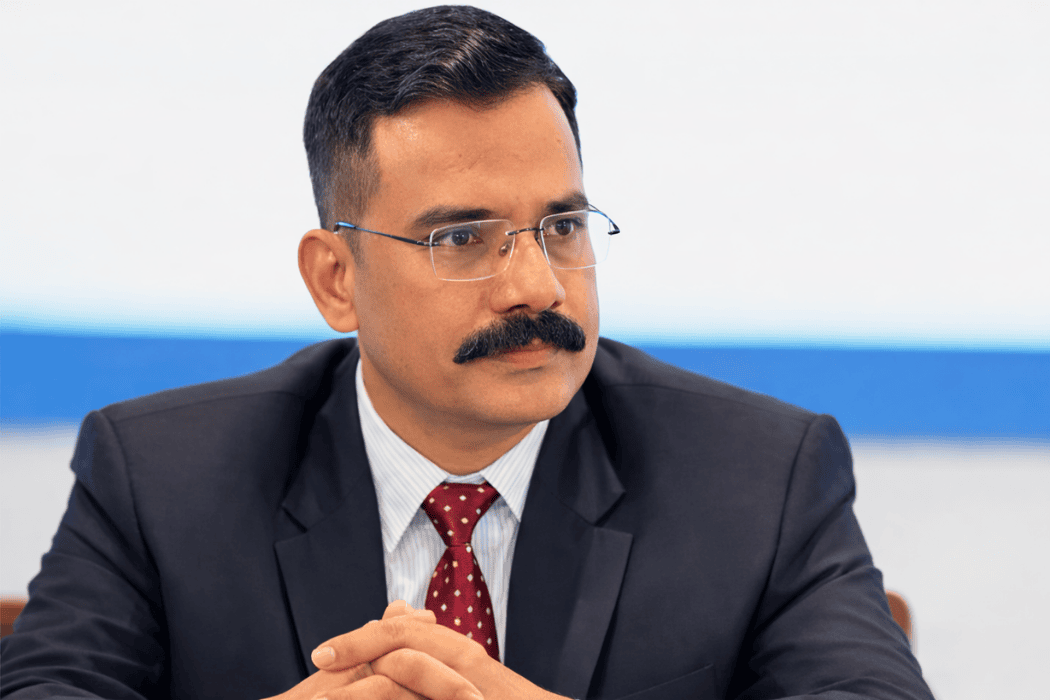

Sheetal Pratik is a Data Leader who has enabled organizations to deliver business value from data. With over 22 years of experience in data-related technologies at large organizations and startups, she has held various roles architecting and delivering data platforms at companies such as Oracle, Colt, NaviSite, Syntel, Mphasis, Reliance Jio Payments Bank, Saxo Bank and Adidas.

She is currently Director of Data Engineering, Data and Analytics, at NatWest, where she works as a Principal Engineer.

She holds a BE in Electronics and Communication, with First Class Distinction, from BIT Mesra, and an MBA from IMT Ghaziabad.

She has authored two research papers on data mesh. Her paper “Data Mesh Adoption: a Multi-Case and Multi-Method Readiness Approach” won the Best Overall Paper award in December 2023 at EMCIS (European, Mediterranean and Middle Eastern Conference on Information Systems), The British University in Dubai.

She was interviewed for her work in 2022 by Harvard Business Review on ‘Creating Business Value with Data Mesh’ for rolling out one of the early federated data governance platforms.

In 2022, she received the Indian Achievers Award from the Indian Achievers Forum.
