So here's the question I want you to sit with before we go any further. What if AI gave you some of those hours back? Not to do more work. But to actually choose.

That's the conversation I think we should be having. Not 'will AI replace your job.' But what will you do with the bandwidth it creates? I've spent 15 years watching technology reshape how people work, across gaming, edtech, and now building multilingual voice AI for physical devices. And the pattern I keep seeing is this: the tool was never the threat. The real disruption was always to our definition of what good looked like.

I've seen this movie before. Twice.

In gaming, we used to celebrate the complexity of NPC behaviour. The more intricate the routine, the more immersive the experience felt. What I understand now, looking back, is that those procedural NPC routines were early, constrained versions of autonomous AI agents. We just didn't have the language for it yet.

And dialogue trees in RPGs, the branching choices that shaped how a story unfolded, those weren't just game design. They were early prompt engineering. The way a player phrased their intent changed the outcome within a constrained model. We were training ourselves to communicate with AI before AI was ready to listen properly.

The shift happening now in product development mirrors what happened then in game design: the boundary between who can build and who can only imagine is dissolving. In gaming, we saw product managers start simulating level generation and player behaviour themselves, putting their hypotheses directly into tools, validating them before they ever reached a developer. Their instincts got sharper. Their briefs got better. The feedback loop shortened in ways that changed the quality of every decision downstream.

Edtech showed me the other side of this. When AI entered learning management systems, the goal wasn't to replace teachers. It was to preserve them at scale. The most effective implementations I saw were trained on how actual teachers explained things: the tone, the analogies, the way a good professor would re-approach a concept when a student didn't get it the first time. When a student asked the same question ten different ways, the AI responded with the same naturalistic quality. Not a database lookup. Something closer to genuine teaching instinct. The soul of the educator, retained and multiplied.

Fifteen years on, I'm building voice-first AI agents for physical devices. The problems are harder: real-time, multilingual, no screen to fall back on, edge hardware with real constraints. But the underlying question is identical to the one I faced in gaming and edtech. Where does the machine stop and the human begin? And who decides?

Three kinds of people in every room right now

When I look around at how people are relating to AI, at work, in their industries, in the discourse online, I consistently see three types.

The first group genuinely understands what AI can and cannot do. Not theoretically. Practically. They're using it to move faster, think sharper, and produce work that would have been impossible for one person to produce alone. Unsurprisingly, they're the ones quietly pulling ahead.

The second group is using AI but misusing it. They've handed over the wheel. Ask AI, accept what it says, ship what it generates. No interrogation, no editing, no judgment applied. The output looks plausible on the surface, until it causes a real problem. These are the people who will get burned, and who will blame the tool when they do.

The third group has decided AI will make us intellectually dependent and professionally soft. They're forming strong opinions from the sidelines, mostly watching.

I don't have patience for that third group's pessimism. Not because the concern is entirely wrong, but because it's ahistorical. Every significant tool has attracted the same fear: writing, the printing press, the calculator, the internet. Each time, humans didn't weaken. We got more capable, raised the benchmark, and moved on. AI is not the end of human intelligence. It's a revolution in how we deploy it.

There was a time when running 100 metres in under 10 seconds was considered biologically impossible. Then someone did it. Humans don't plateau. We recalibrate what's possible.

When execution gets cheap, thinking becomes the scarce resource

Here's something concrete. Not long ago, running a proof of concept required real resources: engineers, weeks, stakeholder alignment, prioritization fights. Most ideas didn't survive that gauntlet. They died in backlogs, untested.

Today, a hypothesis I have at 10am can be built, run, and evaluated by 2pm. The build-measure-learn cycle, the heartbeat of any product team, has compressed at every layer: integration, application, experience. What used to take a quarter can be stress-tested in a week.

But here's what that actually means, and I don't think it's been fully absorbed yet. When execution becomes cheap, thinking becomes the expensive thing. The constraint has moved. It's no longer 'can we build this?' It's 'are we even solving the right problem?'

I've started spending more time falling in love with the problem before I touch a tool. The human need, the psychology, the friction point, understood deeply before anything gets built. That shift in where I invest attention is, I think, the single most important professional adaptation available right now. The people who will compound aren't the fastest executors. They're the clearest thinkers.

And this changes what a good team member looks like. The old signal was output volume: PRs merged, decks delivered, tasks closed. The new signal is the quality of the question being asked before any of that starts. Problem framing over problem solving. 'Are we even solving the right thing?' asked early enough to actually change the answer.

What AI Gave Me Options On. And What I Had to Decide Myself.

I want to be specific here, because vague wisdom about AI judgment is everywhere. Concrete examples are rarer.

When evaluating pipelines for a voice AI experience, multilingual, agentic, running on a physical device, the model consistently recommended deep personalization. Store everything, learn aggressively, optimize continuously. And technically it was right. On every benchmark that matters, that approach wins.

But users don't live in benchmarks. What the model couldn't feel was the difference between personalization that empowers and personalization that unsettles. Users don't mind being understood. They mind being tracked without feeling in control. That distinction is entirely human. The model doesn't experience micro-frustration. It doesn't register trust erosion over three sessions. It doesn't know what it feels like when an interface seems to know too much.

AI optimizes for correctness. We have to optimize for human perception. Those are not the same thing, and confusing them is how products quietly fail.

Some of the most consequential calls I've made recently were ones where AI surfaced three strong directions and I chose one for reasons that had nothing to do with which option scored highest. What matters more: perceived intelligence or real-time responsiveness? Ecosystem growth speed or quality control? These aren't optimization problems. They're judgment calls. And someone has to own them.

Mediocre work sounds like: 'AI suggested this, so I went with it.'

Strong work sounds like: 'AI gave me three directions. I chose this one, and here's precisely why.'

That 'here's why' is where your professional value lives now. Everything else is being compressed.

GenX, Millennials, GenZ. Three Different Relationships With the Same Shift.

The generational dynamics here are real, but they're more nuanced than 'digital natives get it, everyone else is catching up.'

GenZ entering the workforce today has grown up with AI tools, using them for assignments, research, side projects, before most organizations had policies about them. They're not waiting for training. They're already running personal workflows that their managers can't see. The risk isn't recklessness. The risk is that without organizational guidance, they don't develop the framework for knowing when AI assistance is appropriate and when it isn't. That's a leadership gap, not a generational flaw.

Millennials, who lived through the mobile and cloud transitions and carry a decade or more of professional scar tissue, are often the most effective AI users I encounter. They have enough domain depth to catch errors, enough technical fluency to iterate quickly, and enough professional confidence to push back when something feels wrong. They're the ones most likely to develop genuine AI judgment, not just AI familiarity.

GenX leaders face a different and underappreciated challenge. Many built their credibility on expertise accumulated over decades, deep knowledge that AI can now simulate, at least superficially, in seconds. The ones navigating this well are leaning hard into what AI cannot replicate: contextual judgment built from real failures, organizational relationships that took years to earn, the ability to read a room in ways no model can. The ones struggling are those whose professional identity is too tightly wound around the specific knowledge they accumulated, rather than the wisdom underneath it.

Across all three groups, the organizations getting this right are the ones creating explicit space to talk about it. Not as a policy exercise, but as a real conversation about how work is changing and what it means for how people grow.

India's Particular Advantage in This Shift

We've always operated under constraint. Resources, infrastructure, time: none of it has ever been abundant in the way it is in well-funded Western ecosystems. And that constraint has produced something genuinely valuable, a reflex for doing more with less. Finding the elegant workaround. Shipping when conditions aren't perfect, because conditions are never perfect.

That instinct is exactly what effective AI use requires. The best AI users I know aren't the ones with the biggest tool budgets. They're the ones who extract maximum value from what's available, iterate without waiting for permission, and don't confuse access to tools with the ability to use them well.

What's even more significant is that geography is ceasing to be a barrier in a way it never has before. With the right tools and the right disposition, a developer in Nagpur, a designer in Coimbatore, or a product thinker in Jaipur can produce work that competes with anyone's, anywhere. The infrastructure gap that once separated tier 1 from tier 2 talent is collapsing. What remains is curiosity and judgment. Those have never been a city's monopoly.

India's AI-native startups are already proving this. Small teams shipping what once required organizations ten times their size, because they've built AI leverage into how they work from day one. The larger IT firms face a different challenge: client contracts that restrict AI tool usage, compliance obligations that slow adoption, and the organizational weight of scale. Engineers at these firms are navigating a two-speed reality, governed at work and AI-augmented in their own time. That divergence will matter more than most people are acknowledging yet.

What Will You Do With the Hours?

Here's where I'll land.

AI is not going to make you redundant. But it is going to make your habits, your thinking patterns, and your relationship with your own judgment visible in ways they weren't before. The person who accepts AI output without interrogating it will plateau. The person who uses AI to think harder, not just work faster, will compound.

Across fifteen years and three industries, the professionals I've watched grow through every technology shift share one trait: they stayed genuinely curious about the problem, and genuinely skeptical of the first solution. AI doesn't change that formula. It just widens the gap between people who apply it and people who don't, and widens it faster.

The 24-hour question still stands. What AI offers, if you use it well, isn't more output crammed into the same hours. It's more room in those hours to think, to choose, to be deliberate about the kind of professional and the kind of person you're becoming.

The revolution isn't in the tools. It's in what you decide to do with the room they create.

That's always been the human part. AI just made it impossible to ignore.

-- Ends --

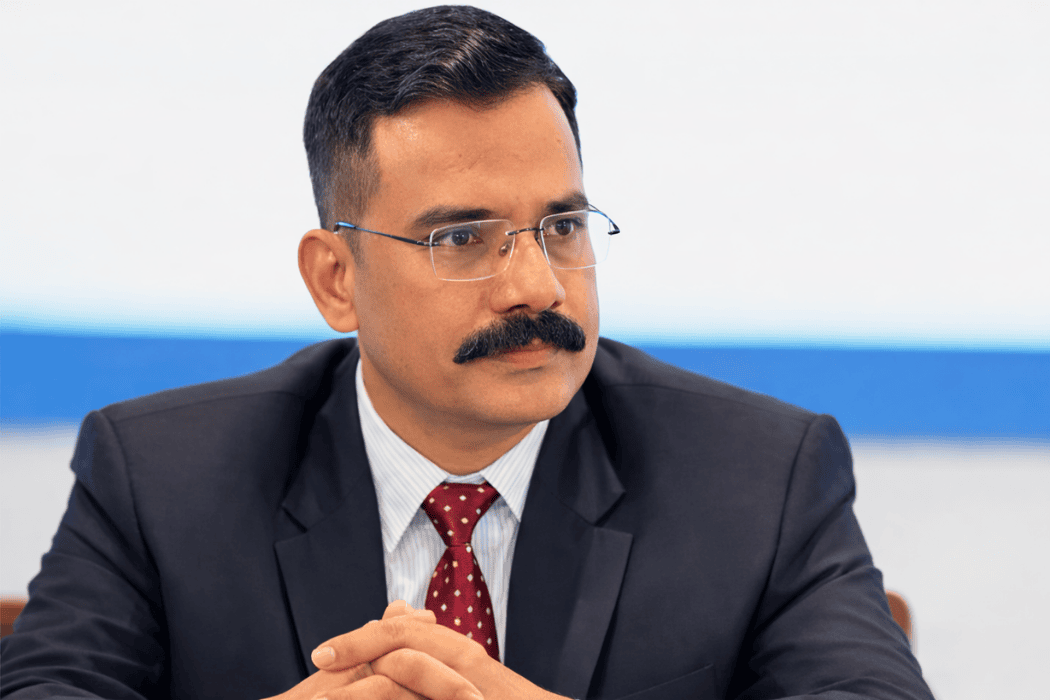

Pranjal is a product and technology leader with 15 years of experience building AI-powered products across gaming, edtech, and intelligent device platforms. He has taken ideas from zero to market across agentic AI systems, voice interfaces, XR platforms, and multimodal AI integrations spanning OS, SDKs, and device hardware. Right now, he is figuring out what it means to lead in a world where AI is changing faster than organisations can keep up.
