Across teams, a familiar pattern is taking shape. A small group of employees uses AI tools daily—drafting faster, analysing quicker, automating repetitive work, and arriving at meetings better prepared. Another group uses them occasionally, cautiously, or not at all. Over time, the output gap widens. Not dramatically at first, but enough to be noticed. And in modern workplaces, visibility matters.

What makes this shift subtle is that it rarely shows up in job descriptions or performance reviews. AI literacy is not yet a formal requirement, but it increasingly influences how work is perceived. The employee who turns around a first draft in minutes appears more responsive. The one who brings data-backed insights appears more strategic. The one who experiments with tools appears more innovative. None of this is framed as “AI skill,” but the advantage compounds.

In startups, this effect is amplified. Lean teams, high pressure, and limited time reward speed and adaptability. Founders and early employees who are comfortable with AI tools move faster by default. They automate operational work, synthesise information quickly, and free up time for decision-making. Those without similar fluency may still perform well, but they work comparatively slowly. In environments optimised for pace, that difference matters.

Corporates are not immune—just slower to acknowledge it. Large organisations often roll out AI tools with broad enthusiasm but limited enablement. Licences are provided, guidelines circulated, and experimentation encouraged in theory. In reality, learning is left to individuals. The result is uneven adoption. Some teams integrate AI deeply into workflows. Others barely touch it. Over time, performance gaps emerge that leadership struggles to explain.

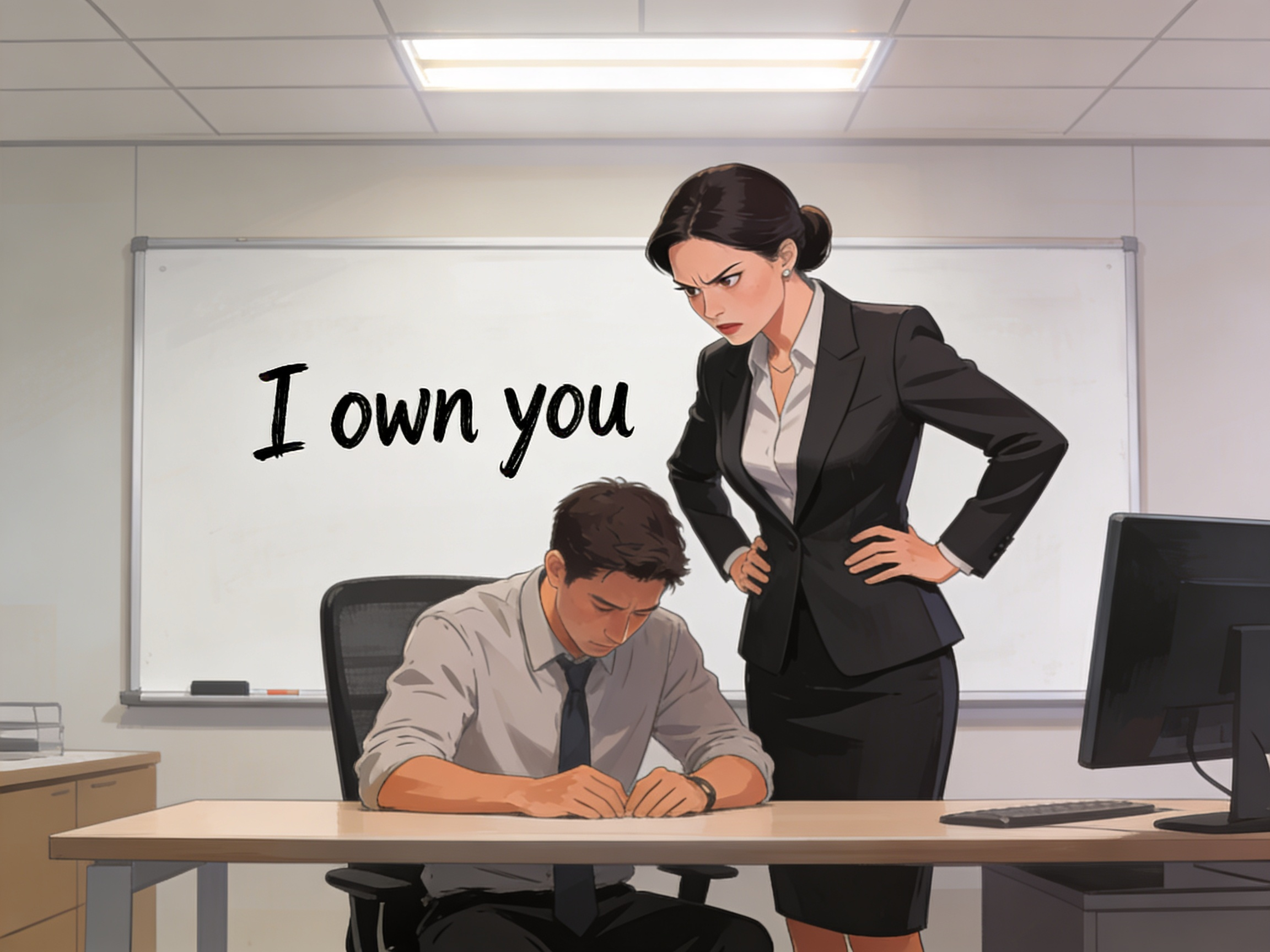

This creates a new kind of inequality at work—one that is rarely discussed openly. Employees who lack AI fluency may be perceived as less capable, even when their core skills remain strong. Managers may unintentionally reward outputs enabled by tools rather than underlying judgment or experience. Promotions and opportunities begin correlating with tool usage, not just competence.

There is also a psychological dimension. Employees comfortable with AI tend to experiment more, take initiative, and feel in control of change. Those who are uncertain often feel threatened, disengaged, or resistant—not because they oppose progress, but because they lack confidence. Over time, this can harden into cultural fault lines within teams: early adopters versus silent sceptics, movers versus maintainers.

Crucially, AI literacy is not about coding or technical depth. It is about practical fluency—knowing what tools exist, how to ask the right questions, how to validate outputs, and when to rely on judgment instead of automation. It is about understanding limitations as much as possibilities. In that sense, AI literacy is closer to critical thinking than technical skill.

Organisations often underestimate this distinction. Training efforts focus on tool demos rather than thinking frameworks. Employees learn what buttons to press, but not when to use them—or when not to. Without shared norms, AI becomes an individual advantage rather than a collective capability.

This has long-term implications for trust and fairness. If AI quietly reshapes influence and progression, organisations risk creating opaque systems of advancement. Employees may feel left behind without understanding why. Performance conversations become harder when outcomes are shaped by invisible enablers rather than visible effort.

The solution is not to slow adoption, but to democratise literacy. Organisations that treat AI as optional experimentation will see divides widen. Those that treat it as a core workplace capability—like communication or analysis—can shape more equitable outcomes. This requires intentional design: shared learning, clear expectations, ethical guidelines, and space to question outputs rather than blindly accept them.

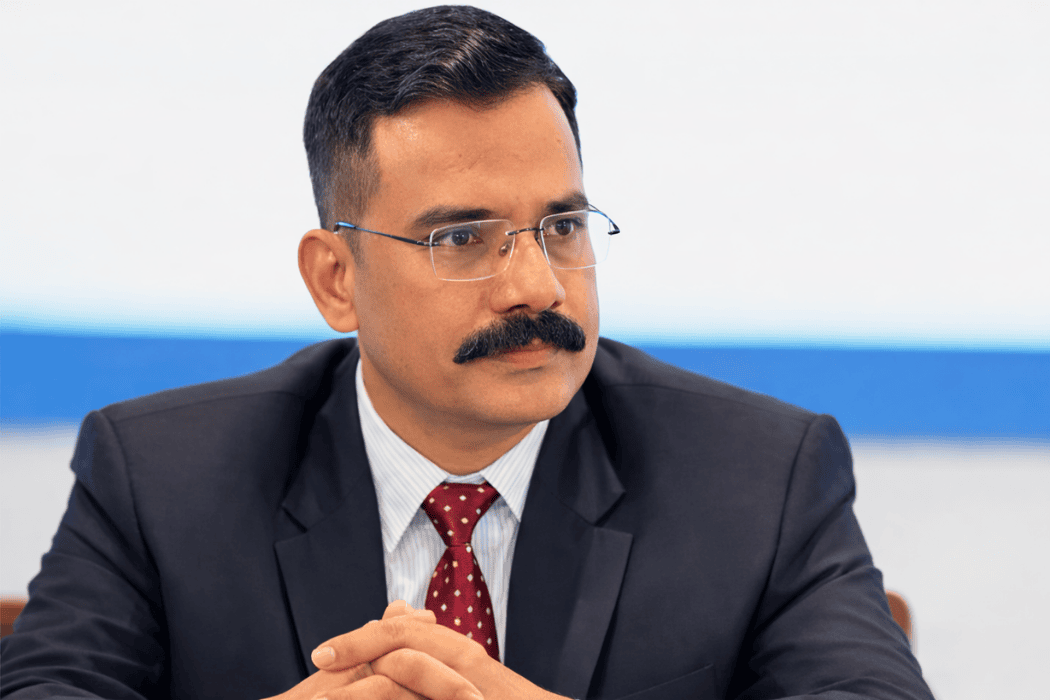

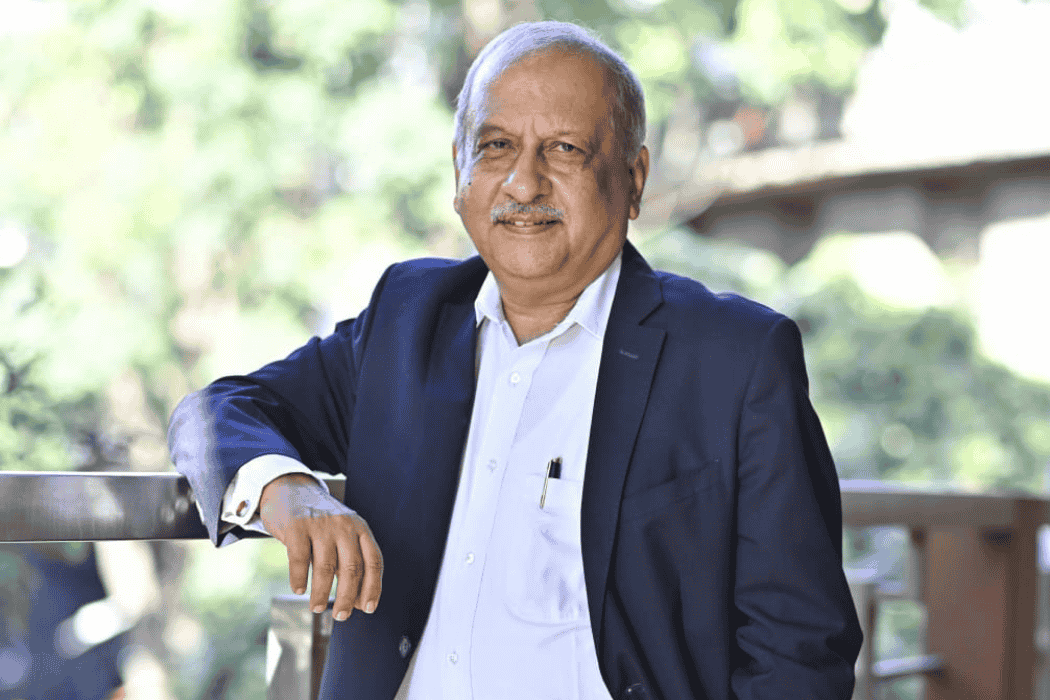

Leaders have a particular responsibility here. When leaders use AI openly—explaining how it informs decisions, where it helps, and where it falls short—they normalise learning and reduce fear. When they quietly rely on tools without transparency, they reinforce power asymmetries.

AI will not replace careers overnight. But it is already reshaping how value is created and recognised inside organisations. The real divide is not between humans and machines. It is between those who understand how to work with systems thoughtfully and those left to navigate change alone.

In the years ahead, AI literacy will likely become as fundamental as digital literacy has. The organisations that recognise this early and invest accordingly will not only perform better. They will build cultures where progress feels shared, not uneven.
