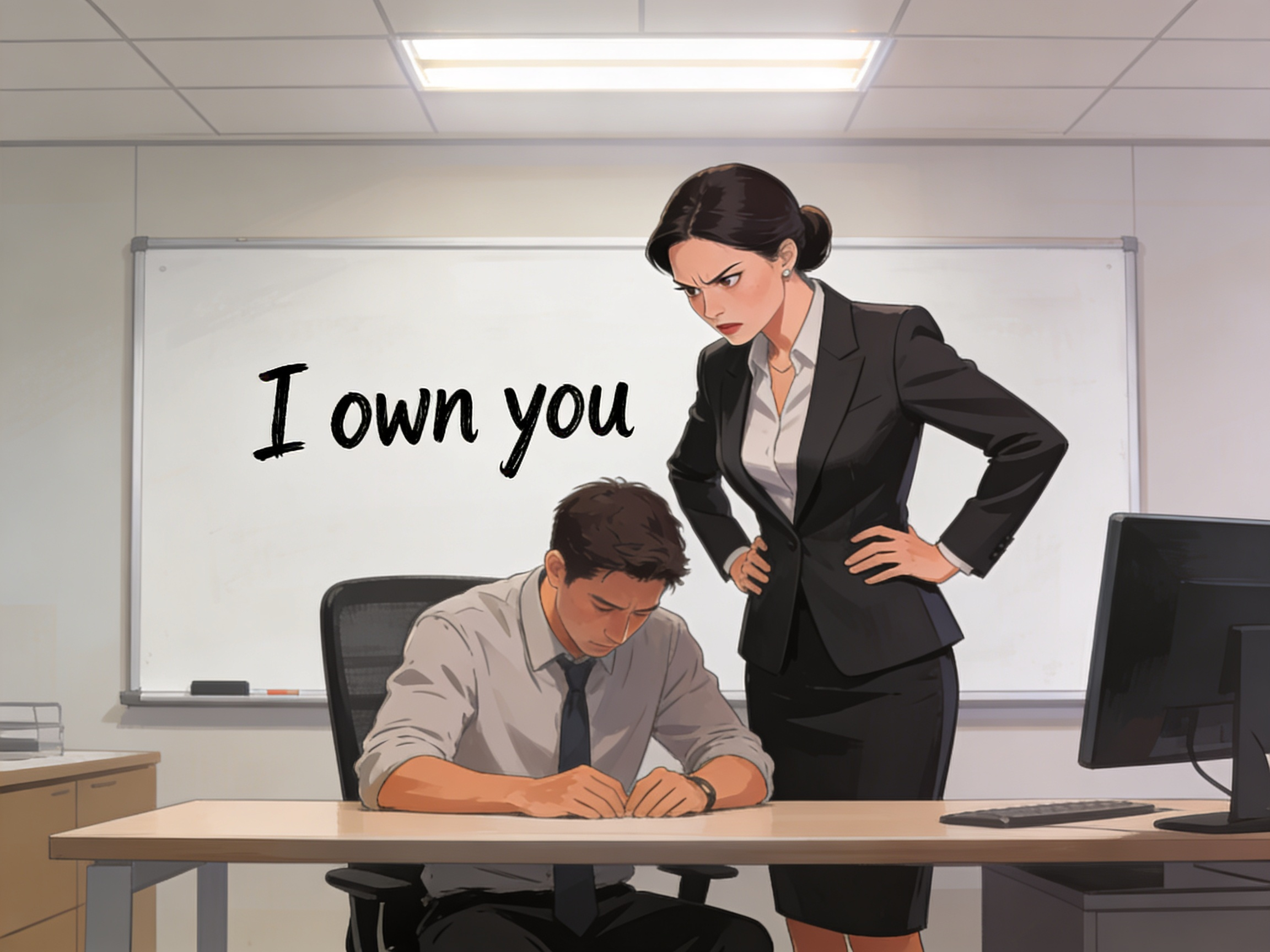

What makes this shift significant is not capability, but trust. Employees may disagree with a human manager, but they understand intent, bias, and context. With AI, decisions arrive without explanation—or worse, with explanations that sound objective but are deeply opaque. When an algorithm decides your work priority or performance ranking, pushing back feels harder. There is no face, no emotion, no room for negotiation.

This creates a subtle reordering of power inside organisations. Managers increasingly act as translators between systems and people, enforcing decisions they did not fully make. Employees begin optimising not for human approval, but for system logic—adjusting behaviour to please dashboards, metrics, and invisible thresholds. Over time, this reshapes culture. Initiative becomes compliance. Creativity becomes pattern-matching.

Startups feel this shift earlier. With lean teams and high pressure, founders rely heavily on AI-driven insights to make fast calls. In such environments, AI doesn’t replace managers—it becomes the manager’s backbone. Corporates, meanwhile, adopt the same tools under the language of efficiency and consistency, often without acknowledging how deeply decision-making is being outsourced.

The question organisations are avoiding is not whether AI can manage, but whether it should. Management is not only about optimisation; it is about judgment, empathy, and accountability. When those qualities are filtered through systems designed for scale, something gets lost.

The next phase of work will not be about humans versus AI. It will be about who sets direction, who explains decisions, and who takes responsibility when systems shape outcomes. Organisations that treat AI as an advisor rather than an authority may preserve trust. Those that quietly allow it to manage by default may find culture eroding long before performance does.
